Ben recently made the following observations on BlueSky, and we thought they were interesting enough to share with all of you: I stumbled across something interesting during a live demo in yesterday's webinar. Not having anything prepared, I tried a page in the Queen's University Archives' Riel Resistance Collection. The hand was extraordinarily difficult for … [Read more...] about AI With Historical Instincts?

How Good HTR is Changing What & How We’re Transcribing

In a recent discussion on BlueSky, Ben and Scott Weingart (and others) had a conversation about the explosion of possibilities that might come from good, cheap, AI transcription. Scott asks “how the bias of the spotlight of what’s searchable will reshape how people will engage with the past. That is, how will history be remembered and written in new ways, and privileging what … [Read more...] about How Good HTR is Changing What & How We’re Transcribing

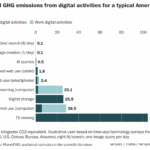

Environmental Impact of AI

At the Society of American Archivists conference last August, I joined a panel on artificial intelligence. The first question from the audience came quickly and, frankly, with a little heat: "How do you justify using energy-intensive tools like AI when the climate crisis is only getting worse?" That moment stuck with me. The archivists in the room were being conscientious—a … [Read more...] about Environmental Impact of AI

How Do You Know Whether AI Is "Good Enough"?

Yesterday we deployed two new features to help you evaluate results from Gemini 3 (and eventually other models) against human-transcribed or human-corrected text. First, we’ve developed a comparison screen that shows the differences between an AI-generated page transcription and human-created ground truth. Next, we calculate statistics, again comparing the AI draft against the human … [Read more...] about How Do You Know Whether AI Is "Good Enough"?
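
As a rough illustration of what comparing an AI draft against ground truth can look like, here is a minimal Python sketch of two widely used transcription metrics, character error rate (CER) and word error rate (WER). This is an assumption for illustration only: the excerpt does not say which statistics FromThePage calculates, and the function names below are hypothetical rather than part of any FromThePage API.

```python
from typing import Sequence


def levenshtein(a: Sequence, b: Sequence) -> int:
    """Edit distance between two sequences.

    Each insertion, deletion, or substitution costs 1.
    Works on strings (characters) or lists (e.g. words).
    """
    # previous[j] holds the distance between a[:i-1] and b[:j]
    previous = list(range(len(b) + 1))
    for i, item_a in enumerate(a, start=1):
        current = [i]
        for j, item_b in enumerate(b, start=1):
            substitution_cost = 0 if item_a == item_b else 1
            current.append(
                min(
                    previous[j] + 1,  # delete item_a
                    current[j - 1] + 1,  # insert item_b
                    previous[j - 1] + substitution_cost,  # substitute or match
                )
            )
        previous = current
    return previous[-1]


def character_error_rate(ai_draft: str, ground_truth: str) -> float:
    """Hypothetical helper: character edits needed, per ground-truth character."""
    if not ground_truth:
        raise ValueError("ground_truth must not be empty")
    return levenshtein(ai_draft, ground_truth) / len(ground_truth)


def word_error_rate(ai_draft: str, ground_truth: str) -> float:
    """Hypothetical helper: word edits needed, per ground-truth word."""
    truth_words = ground_truth.split()
    if not truth_words:
        raise ValueError("ground_truth must contain at least one word")
    return levenshtein(ai_draft.split(), truth_words) / len(truth_words)
```

A lower score means the AI draft is closer to the human text. Both rates can exceed 1.0 when the draft is much longer than the ground truth, so they are better read as edit counts per reference unit than as strict percentages.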

Introducing Gemini 3.0 Support in FromThePage

When Ben sent me Mark Humphries’ report on testing a new, unreleased Gemini model, I got scared. And excited. Mark is a historian and digital humanist who’s gone deep on analyzing AI tools for textual transcription. He understands the dangers of “seductive plausibility” in LLM outputs. He knows what researchers and historians need from archival text. He measures errors … [Read more...] about Introducing Gemini 3.0 Support in FromThePage