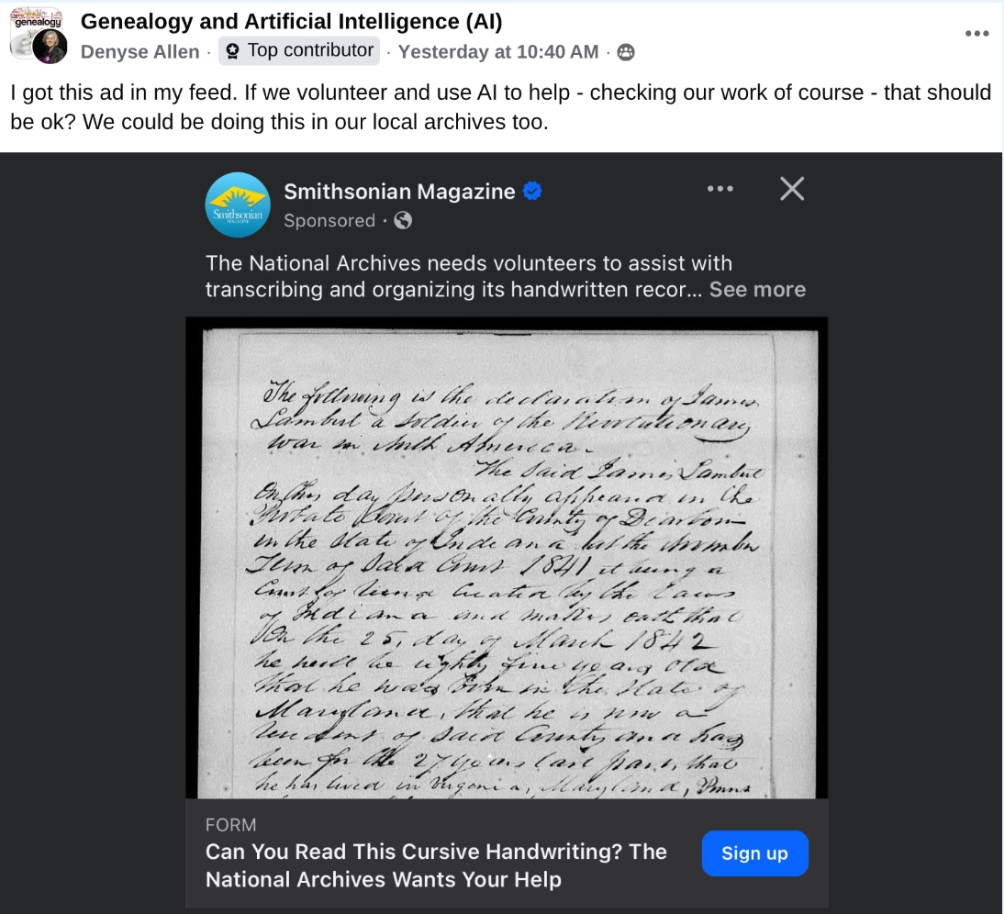

Last month, Denyse Allen asked this question on the Genealogy and AI Facebook group:

If volunteers use AI to transcribe documents, is that OK?

I have strong opinions, but I want to explain the reasoning behind them.

First off, the institutions running big crowdsourcing projects have staff who can automate sending all of their documents to AI engines for transcription. As a software developer, it makes me wince to think of humans copying and pasting images into LLM services, then pasting the results back into crowdsourcing platforms, when a computer program could do the same thing. What a waste of time!

That said, there is a much more important reason that libraries and archives ask humans to transcribe text: transcriptions made by humans are fundamentally different from ones made by machines.

Three kinds of transcription

When humans transcribe documents, the resulting transcript usually has these qualities:

- Verbatim spelling – for most projects, each word will be transcribed letter-for-letter, retaining any irregularities from the original.

- High accuracy – humans are really good at reading handwriting, learning from context and returning to correct readings they got wrong after they master a particular hand or script.

- Willingness to say “I don’t know” – humans are willing to write things like “[illegible]” or “[unclear: possibly Jones?]” when they aren’t certain about a text.
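
Those explicit markers are also something software can find. As a rough sketch, assuming a project uses exactly the two bracket conventions quoted above (the pattern, the function name and the sample line are my own illustrations, not any platform's actual rules), a few lines of Python can pull out every place where a transcriber flagged doubt:

```python
import re

# Assumed marker convention, based only on the two examples above:
# "[illegible]" and "[unclear: <guess>]". Real projects may differ.
UNCERTAIN = re.compile(r"\[(illegible|unclear)(?::\s*([^\]]*))?\]", re.IGNORECASE)


def uncertainty_markers(transcript: str) -> list[tuple[str, str]]:
    """Return a (kind, note) pair for each uncertainty marker in the text."""
    return [
        (m.group(1).lower(), (m.group(2) or "").strip())
        for m in UNCERTAIN.finditer(transcript)
    ]


# Made-up sample line, for illustration only.
sample = "Recd of Mr. [unclear: possibly Jones?] the sum of [illegible] dollars"
markers = uncertainty_markers(sample)
print(markers)
```

A transcript with no such markers is not necessarily wrong, but a long run of difficult pages without a single one is a hint that nobody was admitting doubt.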

Traditional OCR/HTR systems like Kraken or Transkribus also produce transcripts with verbatim spelling, but their accuracy can be lower than a human’s, and they usually produce gibberish when they encounter difficult words. Still, it’s easy to tell that these transcripts were machine-produced, because their errors are so characteristic.

I suspect that the person asking the question was using a multimodal large language model (LLM) like ChatGPT or Google Gemini. Those systems are very exciting, and really easy to use, but the transcripts they produce have very different qualities:

- Regularized spelling – since LLMs are language models, they tend to prioritize readings that make sense. As a result, many of them silently normalize irregular words.

- Uneven accuracy – in the case of “filler words” like prepositions and articles, LLMs don’t even “look at” the image, reverting to their “autocomplete on steroids” behavior to just add words that make sense given the context.

- Overconfidence – if an LLM can’t read something, it will fill in words that make sense to it. This means that you can’t tell when the transcription is accurate, and when it’s just a guess.
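
To make the first point concrete, here is a minimal sketch of how silent normalization could be surfaced when a trusted verbatim transcript of the same page already exists. It uses Python's standard `difflib` module to compare the two word by word. The function name and both sample sentences are invented for illustration; they are not output from any particular model.

```python
from difflib import SequenceMatcher


def changed_words(verbatim: str, candidate: str) -> list[tuple[str, str]]:
    """List the word runs where the candidate differs from the verbatim text."""
    a, b = verbatim.split(), candidate.split()
    matcher = SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            # Pair the original words with whatever replaced them.
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


# Made-up example: a letter-for-letter reading vs. a regularized one.
verbatim = "I recd yr letter and was verry glad to here from you"
candidate = "I received your letter and was very glad to hear from you"

for original, replacement in changed_words(verbatim, candidate):
    print(f"{original!r} -> {replacement!r}")
```

The catch is that this only works when a reliable reference exists. For the pages volunteers are actually being asked to transcribe, there usually isn't one, which is exactly why the normalization goes unnoticed.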

So what happens if an archivist sees a transcription that is supposed to have been created by a volunteer, but was actually produced by an LLM? Unfortunately, the transcript will make sense – the seductive plausibility of LLM output can easily trick readers, even experienced ones, into believing that the text faithfully reproduces the original. But what if the volunteer checks the AI output before contributing it? Spot-checking LLM text can be fiendishly difficult, and requires special techniques to reduce bias.

This is not going to be an easy challenge for those of us who run crowdsourcing projects. We need to explain the goal to volunteers, persuade them to avoid AI short-cuts, and build responsible (and traceable) AI tools into our software platforms.
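
What might “traceable” look like in practice? One possibility, sketched here under my own assumptions (the class names, fields and source categories are hypothetical, not the data model of any existing platform), is to store how each transcription was produced alongside the text, so reviewers know which pages need a letter-for-letter check against the image:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Source(Enum):
    # Hypothetical categories, mirroring the three kinds of transcription above.
    HUMAN = "human"
    HTR = "htr"
    LLM = "llm"
    LLM_HUMAN_REVIEWED = "llm+human-review"


@dataclass(frozen=True)
class Transcription:
    page_id: str
    text: str
    source: Source
    tool: Optional[str] = None  # name of the engine used, if any


def needs_verbatim_check(t: Transcription) -> bool:
    """Flag text that may have been silently regularized by a language model."""
    return t.source in {Source.LLM, Source.LLM_HUMAN_REVIEWED}


page = Transcription("page-001", "I received your letter", Source.LLM, tool="example-llm")
print(needs_verbatim_check(page))
```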